From hardening to catching: how agentic AI is rewriting video service testing

By Yoann Hinard, COO

In January 2026, researchers at Meta published a fascinating paper: Just-in-Time Catching Test Generation at Meta [1]. Their system auto-generates throwaway tests at the exact moment a code change is submitted, runs them once to detect regressions, and discards them. It’s a clever approach for unit testing at internet scale, where executing a test costs milliseconds.

But when your test involves launching an app on a Fire TV Stick, logging into a streaming account, navigating to a content page, and verifying playback, this logic cannot be applied directly. In end-to-end testing, every test execution requires a real device, an installable build of the application, and a working backend.

So what can we actually learn from Meta’s research? More than I first thought, because the underlying ideas translate powerfully once you pair them with agentic AI.

Three kinds of tests, three different jobs

Meta’s paper draws a clean distinction between two categories of automated tests, to which we can add a third that is native to device-based QA.

Hardening tests are permanent. They live in the test suite, run on every build, and act as a safety net. If a new release breaks something that used to work, a hardening test catches it, sometimes weeks or months after it was written. In video service testing, this is your sanity suite: the 50 to 100 tests covering launch, login, playback, search, and core navigation that run daily across your device fleet.

Catching tests, as Meta defines them, are ephemeral. They’re generated on the fly when a specific code change is submitted, run once against both the old and new version, and are thrown away. If the test passes on the previous build but fails on the new one, that’s a signal worth investigating. The test never enters the permanent suite.
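The pass-on-old, fail-on-new rule is simple enough to state in code. The sketch below is not Meta’s implementation; it assumes, purely for illustration, that a generated test is a function taking a build identifier and returning True on pass. The names `GeneratedTest`, `is_catch`, and `homepage_loads` are hypothetical.

```python
from typing import Callable

# Hypothetical shape: a generated test runs against one build
# (identified here by a version string) and returns True if it passes.
GeneratedTest = Callable[[str], bool]


def is_catch(test: GeneratedTest, old_build: str, new_build: str) -> bool:
    """Run a throwaway test once on each build and report whether it 'catches' the change."""
    passed_on_old = test(old_build)
    passed_on_new = test(new_build)
    # Only pass-on-old / fail-on-new is a regression signal worth investigating.
    # A test that fails on both builds says nothing about this particular change.
    return passed_on_old and not passed_on_new


# Stand-in test logic: pretend that version "2.0" broke the homepage.
def homepage_loads(build: str) -> bool:
    return build != "2.0"


# The test is evaluated once and then discarded; nothing enters a permanent suite.
print(is_catch(homepage_loads, "1.9", "2.0"))  # True
```

Note that the rule needs two executions per change, one per build. That cost is trivial for a unit test but, as the next section explains, not for a test that runs on a physical device.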

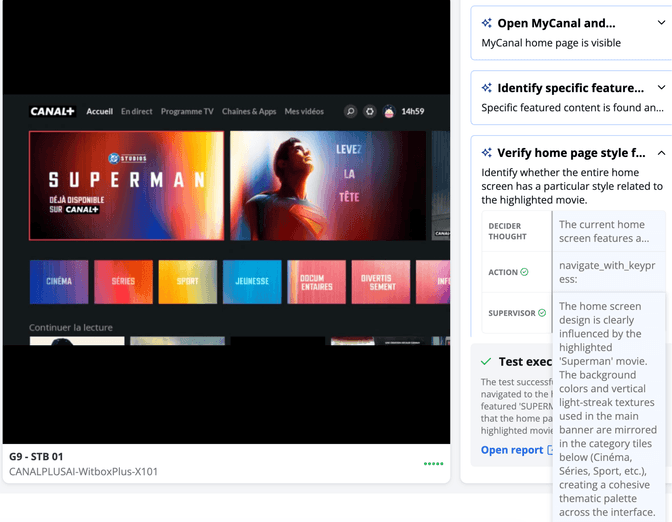

One-off validation tests are something we encounter constantly in video services, but Meta’s framework doesn’t address them. When Canal+ skins their entire homepage for a Superman movie launch, or when a broadcaster deploys a special app layout for the Olympics, someone needs to verify it looks right across every device model. These tests will never run again. Writing a full script for them makes no economic sense, yet they need to execute on dozens of devices.

Why catching tests don’t work on devices yet

Meta can afford to generate and discard thousands of tests for every code change because each execution is essentially free in terms of time, storage, and compute. End-to-end testing works very differently: you need to build a working app, sideload it onto a physical device, authenticate, navigate to the right screen, and only then run the validation. Crafting a throwaway test that must run twice per commit, once on the old build and once on the new one, is therefore impractical, not least because every commit would also need to produce a working, installable build. Unit-test logic cannot be mapped one-to-one onto end-to-end testing.

But the concept behind catching tests, focusing test effort on what just changed, absolutely applies. When a new app build ships with changes to the DVR recording flow, you don’t need to re-run your entire 500-test suite. You prioritize the DVR-specific tests. That’s diff-awareness adapted to the realities of physical devices.
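One way to picture this diff-awareness is as a filter over a tagged suite. The sketch below assumes a hypothetical tagging scheme in which each end-to-end test lists the app components it exercises, and the set of changed components comes from release notes. `E2ETest`, `select_tests`, and the test names are illustrative placeholders, not part of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class E2ETest:
    name: str
    # App areas this test exercises (hypothetical tagging scheme).
    components: frozenset[str]


def select_tests(suite: list[E2ETest], changed: set[str]) -> list[E2ETest]:
    """Keep only the tests that touch at least one changed component, in suite order."""
    return [t for t in suite if t.components & changed]


suite = [
    E2ETest("dvr_record_series", frozenset({"dvr"})),
    E2ETest("login_email_password", frozenset({"auth"})),
    E2ETest("dvr_playback_resume", frozenset({"dvr", "player"})),
    E2ETest("search_by_title", frozenset({"search"})),
]

# Release notes say this build only touched the DVR recording flow.
selected = select_tests(suite, {"dvr"})
print([t.name for t in selected])  # ['dvr_record_series', 'dvr_playback_resume']
```

This selection narrows only the change-specific layer of testing; the daily sanity suite described earlier would presumably keep running as the hardening safety net.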

What changes with agentic AI

Here’s where things get genuinely transformative. The bottleneck in video service testing is usually not “we don’t have enough tests.” It’s that creating, maintaining, and adapting tests requires specialized scripting skills and sustained effort: skills that content operations and marketing teams are not expected to have, and effort that makes scaling difficult for QA teams even when they do have the skills.

Agentic AI removes that bottleneck. Instead of writing a coded test script, you express an intent: “Verify that the homepage displays Superman branding on the Canal+ app.” An AI agent, running on a Witbe Witbox connected to real devices, interprets that intent, navigates the app autonomously, evaluates what it sees on screen, and reports back. No script to write. No script to maintain. No script to throw away.

This unlocks all three categories of testing for teams that were previously locked out:

- For QA and engineering teams, agentic intent replaces brittle, high-maintenance scripts with natural language goals. Hardening suites become easier to build and adapt. Deeper, component-specific test sets can be triggered dynamically based on what changed in a release: diff-aware testing without the overhead of scripting every scenario.

- For operations and content teams, this is entirely new territory. A content operations manager can now verify that a promotional campaign renders correctly across 30 device models by describing what they expect to see. A marketing team launching a themed experience can validate it in hours, not days, without filing a QA ticket and waiting in a sprint queue.

- For one-off validations, agentic AI is the only approach that makes economic sense. These tests are too short-lived to script and too important to skip. An intent-driven agent handles them naturally: describe the expected state, run it across the fleet, get results.
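The “describe the expected state, run it across the fleet, get results” loop can be sketched as a simple parallel fan-out of one intent to many devices. This is not Witbe’s API: the `Agent` signature, `run_intent_on_fleet`, the device identifiers, and `fake_agent` are hypothetical placeholders. In a real setup, each call would be an AI agent navigating the app on a physical device.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class DeviceResult:
    device: str
    passed: bool
    note: str


# Hypothetical agent interface: given a natural-language intent and a device id,
# the agent navigates the app and returns (passed, short explanation).
Agent = Callable[[str, str], tuple[bool, str]]


def run_intent_on_fleet(intent: str, devices: list[str], agent: Agent,
                        max_workers: int = 8) -> list[DeviceResult]:
    """Send the same intent to every device in parallel and collect one result per device."""
    def run_one(device: str) -> DeviceResult:
        passed, note = agent(intent, device)
        return DeviceResult(device, passed, note)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in input order, so results line up with devices.
        return list(pool.map(run_one, devices))


# Fake agent standing in for a real one: one device "fails" the check.
def fake_agent(intent: str, device: str) -> tuple[bool, str]:
    if device == "fire_tv_stick_4k":
        return False, "promotional banner not rendered"
    return True, "branding visible on homepage"


results = run_intent_on_fleet(
    "Verify that the homepage displays Superman branding",
    ["android_tv", "fire_tv_stick_4k", "apple_tv"],
    fake_agent,
)
failing = [r.device for r in results if not r.passed]
print(failing)  # ['fire_tv_stick_4k']
```

The point of the design is that the only per-campaign input is the intent string; nothing device-specific has to be scripted, which is what makes short-lived validations economical.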

From test-driven development to intent-driven testing

Meta’s paper frames the future as “catching JiTTests,” automatically generated code tests that probe specific changes. In video service testing, we see a parallel but different future: intent-driven testing, where the unit of automation is not a script but a goal.

This echoes test-driven development (TDD), but adapted for a world where the “tests” are visual, experiential, and run on physical hardware. You define the expected user journey, derived from an epic, a product requirement, or even a deployment ticket, and the agentic system figures out how to verify it.

The profound shift isn’t only technical. It’s conceptual. Testing stops being something only engineers can do. It becomes something anyone with domain knowledge can drive, because describing what should happen on screen is a skill that content teams, product managers, and operations staff already have.

What comes next

We’re at the beginning of this shift. The foundational elements are in place: AI agents that can navigate real apps on real devices, vision models that can assess what’s on screen, and natural language interfaces that make test creation accessible to non-engineers.

The next step is connecting these capabilities to the systems teams already use. When a deployment ticket lands in Jira, the testing intent could be derived automatically. When a content calendar flags a themed launch event, validation tests could be queued across the device fleet before anyone asks. Testing becomes a reflex of the organization, not a task assigned to a specialized team.

The companies that move fastest will be those that stop thinking about testing as a purely engineering function and start treating it as an organization-wide capability, powered by agents, driven by intent, and executed across every device their customers actually use.

References

[1] Becker, M., Chen, Y., Cochran, N., Ghasemi, P., Gulati, A., Harman, M., Haluza, Z., Honarkhah, M., Robert, H., Liu, J., Liu, W., Thummala, S., Yang, X., Xin, R., & Zeng, S. (2026). Just-in-Time Catching Test Generation at Meta. In Companion Proceedings of the 34th ACM International Conference on the Foundations of Software Engineering (FSE Companion ’26), June 2026, Montreal. ACM. https://arxiv.org/abs/2601.22832