Introducing the AI assistant in Smartgate: ask your test data anything

By Noemie Galabru, CMO

A test fails on a 2018 Samsung TV at 2:14 a.m. By the time the on-call engineer pulls up Smartgate, the failure is already labeled, already compared against the successful runs from earlier in the week, already paired with a full video recording cued to the exact frame where playback broke. The data is there. The question is how fast the team can turn it into an answer.

That's where the new AI assistant in Smartgate comes in.

What Smartgate already does

Smartgate is Witbe's observability and reporting platform. Every test result coming out of the Remote Eye Controller (REC) and the Agentic SDK flows into it: pass and fail counts, device health, daily trends, error categories, and full video recordings linked to the timestamp where each issue occurred.

Behind the views, the platform is doing real analytical work. Every test result is automatically labeled and categorized. Root causes are surfaced by comparing failing executions against successful ones for the same scenario, on the same device family, under the same conditions. Degradation trends are detected across devices, ISPs, and regions, so a slow regression on one operator network doesn't stay buried inside a green-looking week.
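To make the trend-detection idea concrete, here is a minimal sketch of a week-over-week pass-rate comparison per device. It is an illustration only, not Smartgate's implementation: the `RunResult` record, the `degrading_devices` function, and the 10-point `min_drop` threshold are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    """One test execution (hypothetical schema, not Smartgate's data model)."""
    device: str
    week: int      # week number of the run
    passed: bool


def pass_rates(results):
    """Return {(device, week): pass rate} for the given results."""
    counts = defaultdict(lambda: [0, 0])  # (device, week) -> [passes, total]
    for r in results:
        bucket = counts[(r.device, r.week)]
        bucket[0] += int(r.passed)
        bucket[1] += 1
    return {key: passes / total for key, (passes, total) in counts.items()}


def degrading_devices(results, previous_week, current_week, min_drop=0.10):
    """List devices whose pass rate fell by at least min_drop between two weeks."""
    rates = pass_rates(results)
    devices = sorted({device for device, _ in rates})
    flagged = []
    for device in devices:
        before = rates.get((device, previous_week))
        after = rates.get((device, current_week))
        if before is None or after is None:
            continue  # no data for one of the weeks: nothing to compare
        if before - after >= min_drop:
            flagged.append((device, before, after))
    return flagged


# Made-up sample data: Roku drops from 9/10 to 6/10, Samsung TV stays at 10/10.
results = (
    [RunResult("Roku", 1, True)] * 9 + [RunResult("Roku", 1, False)] * 1
    + [RunResult("Roku", 2, True)] * 6 + [RunResult("Roku", 2, False)] * 4
    + [RunResult("Samsung TV", 1, True)] * 10
    + [RunResult("Samsung TV", 2, True)] * 10
)
print(degrading_devices(results, previous_week=1, current_week=2))
```

In this made-up sample only the Roku is flagged. A production system would presumably also segment by ISP and region and account for sample size, which this sketch leaves out.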

This is what Smartgate has been doing in production for years: turning real-device test results into measurable, watchable proof.

Smartgate AI lets you interact with your data in plain English. Watch the video below to see it in action.

A new way in: the AI assistant

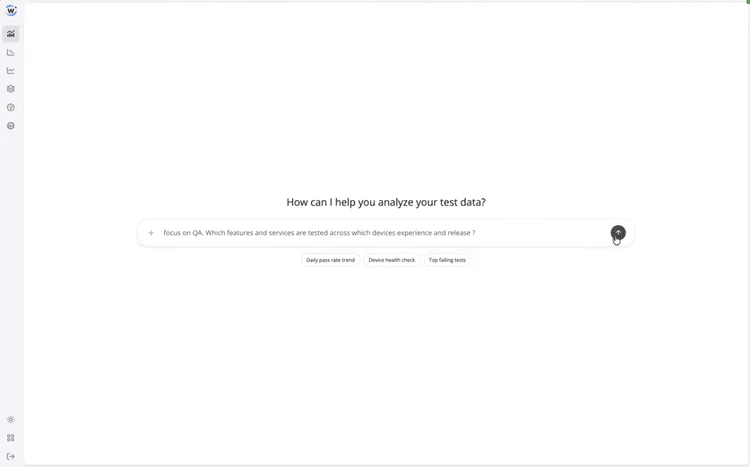

What's new is the layer above. Smartgate now ships with a conversational AI assistant: a chat interface that lets anyone on the team ask questions of the same data, in plain language. Example prompts:

- “Did pass rates drop on Smart TVs in France last night?”

- “Which devices are degrading week over week?”

- “Show me failing executions of the VOD playback test on Roku.”

The assistant works on top of what Smartgate already produces. It uses the auto-labels, the pass-fail comparisons, the trend detection, and the video-linked evidence, and shapes them into a direct answer. Hybrid by design: the analytics underneath stay deterministic; the conversation on top is where the AI does its work. Every answer points back to the same dashboards, the same drill-downs, and the same video proof that Smartgate has always offered.

Same data, more hands on it

The senior engineer who reads runs and writes the morning summary still has Smartgate exactly as they always did: the dashboards, the views, the drill-downs. What the assistant adds is a new way for everyone else to reach the data without learning how to navigate it first.

A QA lead can ask why the Roku has suddenly gone red. A support manager can ask which markets had the worst playback last night. An exec can ask whether the latest release is degrading anything compared with the previous one, and get a grounded answer back, with a video to watch, in the same flow.

That widens the audience for Smartgate without changing what it does underneath. The proof remains the same: real devices, real conditions, results comparable across runs.

From dashboards to dialogue

Test data has always been Smartgate's job. What the AI assistant adds is a new way to reach it: by conversation instead of navigation. The numbers underneath are the same ones Witbe customers already trust. What's new is that asking a question of them no longer requires knowing where to click first.
